JMP | Statistical Discovery.™ From SAS.

Statistics Knowledge Portal

A free online introduction to statistics

Chi-Square Test of Independence

What is the chi-square test of independence?

The Chi-square test of independence is a statistical hypothesis test used to determine whether two categorical or nominal variables are likely to be related or not.

When can I use the test?

You can use the test when you have counts of values for two categorical variables.

Can I use the test if I have frequency counts in a table?

Yes. If you have only a table of values that shows frequency counts, you can use the test.


The Chi-square test of independence checks whether two variables are likely to be related or not. We have counts for two categorical or nominal variables. We also have an idea that the two variables are not related. The test gives us a way to decide if our idea is plausible or not.

The sections below discuss what we need for the test, how to do the test, understanding results, statistical details and understanding p-values.

What do we need?

For the Chi-square test of independence, we need two variables. Our idea is that the variables are not related. Here are a couple of examples:

- We have a list of movie genres; this is our first variable. Our second variable is whether or not the patrons of those genres bought snacks at the theater. Our idea (or, in statistical terms, our null hypothesis) is that the type of movie and whether or not people bought snacks are unrelated. The owner of the movie theater wants to estimate how many snacks to buy. If movie type and snack purchases are unrelated, estimating will be simpler than if the movie types impact snack sales.

- A veterinary clinic has a list of dog breeds they see as patients. The second variable is whether owners feed dry food, canned food or a mixture. Our idea is that the dog breed and types of food are unrelated. If this is true, then the clinic can order food based only on the total number of dogs, without consideration for the breeds.

For a valid test, we need:

- Data values that are a simple random sample from the population of interest.

- Two categorical or nominal variables. Don't use the independence test with continuous variables that define the category combinations. However, the counts for the combinations of the two categorical variables will be numeric.

- For each combination of the levels of the two variables, we need at least five expected values. When we have fewer than five for any one combination, the test results are not reliable.

Chi-square test of independence example

Let’s take a closer look at the movie snacks example. Suppose we collect data for 600 people at our theater. For each person, we know the type of movie they saw and whether or not they bought snacks.

Let’s start by answering: Is the Chi-square test of independence an appropriate method to evaluate the relationship between movie type and snack purchases?

- We have a simple random sample of 600 people who saw a movie at our theater. We meet this requirement.

- Our variables are the movie type and whether or not snacks were purchased. Both variables are categorical. We meet this requirement.

- The last requirement is for at least five expected values for each combination of the two variables. To confirm this, we need to know the total counts for each type of movie and the total counts for whether snacks were bought or not. For now, we assume we meet this requirement and will check it later.

It appears we have indeed selected a valid method. (We still need to check that at least five values are expected for each combination.)

Here is our data summarized in a contingency table:

Table 1: Contingency table for movie snacks data

| Type of Movie | Snacks | No Snacks |
|---|---|---|
| Action | 50 | 75 |
| Comedy | 125 | 175 |
| Family | 90 | 30 |
| Horror | 45 | 10 |

Before we go any further, let’s check the assumption of five expected values in each category. The data has more than five counts in each combination of Movie Type and Snacks. But what are the expected counts if movie type and snack purchases are independent?

Finding expected counts

To find expected counts for each Movie-Snack combination, we first need the row and column totals, which are shown below:

Table 2: Contingency table for movie snacks data with row and column totals

| Type of Movie | Snacks | No Snacks | Row totals |
|---|---|---|---|
| Action | 50 | 75 | 125 |
| Comedy | 125 | 175 | 300 |
| Family | 90 | 30 | 120 |
| Horror | 45 | 10 | 55 |
| Column totals | 310 | 290 | GRAND TOTAL = 600 |

The expected counts for each Movie-Snack combination are based on the row and column totals. We multiply the row total by the column total and then divide by the grand total. This gives us the expected count for each cell in the table. For example, for the Action-Snacks cell, we have:

$ \frac{125\times310}{600} = \frac{38,750}{600} \approx 64.58 \approx 65 $

We rounded the answer to the nearest whole number. If there is no relationship between movie type and snack purchasing, we would expect 65 people to have watched an action film with snacks.
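The same rule can be applied to every cell at once. The sketch below is a minimal illustration in plain Python, not part of the original tutorial; the function name `expected_counts` and the dictionary layout are assumptions chosen for this example. It uses the movie snacks counts from the contingency table and also checks the requirement that every expected count is at least five.

```python
# Observed counts from the movie snacks example: [Snacks, No Snacks] per movie type.
observed = {
    "Action": [50, 75],
    "Comedy": [125, 175],
    "Family": [90, 30],
    "Horror": [45, 10],
}


def expected_counts(table):
    """Expected count per cell under independence: row total * column total / grand total."""
    row_totals = {name: sum(counts) for name, counts in table.items()}
    col_totals = [sum(col) for col in zip(*table.values())]
    grand_total = sum(row_totals.values())
    return {
        name: [row_totals[name] * col_total / grand_total for col_total in col_totals]
        for name in table
    }


expected = expected_counts(observed)
for movie, counts in expected.items():
    print(movie, [round(c, 2) for c in counts])

# Requirement for a valid test: every expected count is at least five.
meets_requirement = all(c >= 5 for counts in expected.values() for c in counts)
print("All expected counts >= 5:", meets_requirement)
```

Keeping the expected counts unrounded, as this sketch does, avoids carrying rounding error into later calculations.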

Here are the actual and expected counts for each Movie-Snack combination. In each cell of Table 3 below, the expected count appears in bold, in parentheses, after the actual count. The expected counts are rounded to the nearest whole number.

Table 3: Contingency table for movie snacks data showing actual count vs. expected count

| Type of Movie | Snacks | No Snacks | Row totals |
|---|---|---|---|
| Action | 50 (**65**) | 75 (**60**) | 125 |
| Comedy | 125 (**155**) | 175 (**145**) | 300 |
| Family | 90 (**62**) | 30 (**58**) | 120 |
| Horror | 45 (**28**) | 10 (**27**) | 55 |
| Column totals | 310 | 290 | GRAND TOTAL = 600 |

When using software, these calculated values will be labeled as “expected values,” “expected cell counts” or some similar term.

All of the expected counts for our data are larger than five, so we meet the requirement for applying the independence test.

Before calculating the test statistic, let’s look at the contingency table again. The expected counts use the row and column totals. If we look at each of the cells, we can see that some expected counts are close to the actual counts but most are not. If there is no relationship between the movie type and snack purchases, the actual and expected counts will be similar. If there is a relationship, the actual and expected counts will be different.

A common mistake with expected counts is to simply divide the grand total by the number of cells. For our movie data, this is 600 / 8 = 75. This is not correct. We know the row totals and column totals. These are fixed and cannot change for our data. The expected values are based on the row and column totals, not just on the grand total.

Performing the test

The basic idea in calculating the test statistic is to compare actual and expected values, given the row and column totals that we have in the data. First, we calculate the difference between the actual and expected values for each Movie-Snacks combination. Next, we square that difference. Squaring gives the same importance to combinations with fewer actual values than expected and combinations with more actual values than expected. Next, we divide by the expected value for the combination. We add up these values for each Movie-Snacks combination. This gives us our test statistic.

This is much easier to follow using the data from our example. Table 4 below shows the calculations for each Movie-Snacks combination carried out to two decimal places.

Table 4: Preparing to calculate our test statistic

| Type of Movie | Snack purchase | Actual | Expected | Difference | Squared difference | Divide by expected |
|---|---|---|---|---|---|---|
| Action | Snacks | 50 | 64.58 | 50 – 64.58 = -14.58 | 212.67 | 212.67/64.58 = 3.29 |
| Action | No Snacks | 75 | 60.42 | 75 – 60.42 = 14.58 | 212.67 | 212.67/60.42 = 3.52 |
| Comedy | Snacks | 125 | 155 | 125 – 155 = -30 | 900 | 900/155 = 5.81 |
| Comedy | No Snacks | 175 | 145 | 175 – 145 = 30 | 900 | 900/145 = 6.21 |
| Family | Snacks | 90 | 62 | 90 – 62 = 28 | 784 | 784/62 = 12.65 |
| Family | No Snacks | 30 | 58 | 30 – 58 = -28 | 784 | 784/58 = 13.52 |
| Horror | Snacks | 45 | 28.42 | 45 – 28.42 = 16.58 | 275.01 | 275.01/28.42 = 9.68 |
| Horror | No Snacks | 10 | 26.58 | 10 – 26.58 = -16.58 | 275.01 | 275.01/26.58 = 10.35 |

Lastly, to get our test statistic, we add the numbers in the final column for each Movie-Snacks combination:

$ 3.29 + 3.52 + 5.81 + 6.21 + 12.65 + 13.52 + 9.68 + 10.35 = 65.03 $
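The calculation above can be sketched in a few lines of plain Python. This is an illustrative sketch rather than the tutorial's own method; the function name `chi_square_statistic` is an assumption. Because it keeps the expected counts unrounded, the result comes out at roughly 65.0, a shade below the hand-calculated 65.03, which sums values that were each rounded to two decimal places.

```python
def chi_square_statistic(observed):
    """Sum (actual - expected)^2 / expected over every cell of a contingency table."""
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    grand_total = sum(row_totals)

    statistic = 0.0
    for i, row in enumerate(observed):
        for j, actual in enumerate(row):
            # Expected count under independence for this cell.
            expected = row_totals[i] * col_totals[j] / grand_total
            statistic += (actual - expected) ** 2 / expected
    return statistic


# Rows: Action, Comedy, Family, Horror. Columns: Snacks, No Snacks.
movie_snacks = [[50, 75], [125, 175], [90, 30], [45, 10]]
test_statistic = chi_square_statistic(movie_snacks)
print(round(test_statistic, 2))
```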

To make our decision, we compare the test statistic to a value from the Chi-square distribution. This activity involves five steps:

- We decide on the risk we are willing to take of concluding that the two variables are not independent when in fact they are. For the movie data, we had decided prior to our data collection that we are willing to take a 5% risk of saying that the two variables – Movie Type and Snack Purchase – are not independent when they really are independent. In statistics-speak, we set the significance level, α, to 0.05.

- We calculate a test statistic. As shown above, our test statistic is 65.03.

- We find the critical value from the Chi-square distribution based on our degrees of freedom and our significance level. If the two variables are independent, the test statistic will exceed this value only with probability α.

- The degrees of freedom depend on how many rows and how many columns we have. The degrees of freedom (df) are calculated as: $ \text{df} = (r-1)\times(c-1) $ In the formula, r is the number of rows, and c is the number of columns in our contingency table. From our example, with Movie Type as the rows and Snack Purchase as the columns, we have: $ \text{df} = (4-1)\times(2-1) = 3\times1 = 3 $ The Chi-square value with α = 0.05 and three degrees of freedom is 7.815.

- We compare the value of our test statistic (65.03) to the Chi-square value. Since 65.03 > 7.815, we reject the idea that movie type and snack purchases are independent.
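The decision steps above can be sketched as follows. This is a minimal illustration; the function names are assumptions made for this example, and the critical value 7.815 is taken directly from the text rather than computed.

```python
def degrees_of_freedom(n_rows, n_cols):
    """df = (r - 1) * (c - 1) for an r x c contingency table."""
    return (n_rows - 1) * (n_cols - 1)


def reject_independence(test_statistic, critical_value):
    """Reject the idea of independence when the test statistic exceeds the critical value."""
    return test_statistic > critical_value


# Four movie types (rows) and two snack outcomes (columns).
df = degrees_of_freedom(4, 2)

# Critical value for alpha = 0.05 and three degrees of freedom, as quoted in the text.
CRITICAL_VALUE = 7.815

decision = reject_independence(65.03, CRITICAL_VALUE)
print("df =", df)
print("Reject independence:", decision)
```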

We conclude that there is some relationship between movie type and snack purchases. The owner of the movie theater cannot estimate how many snacks to buy regardless of the type of movies being shown. Instead, the owner must think about the type of movies being shown when estimating snack purchases.

It's important to note that we cannot conclude that the type of movie causes a snack purchase. The independence test tells us only whether there is a relationship or not; it does not tell us that one variable causes the other.

Understanding results

Let’s use graphs to understand the test and the results.

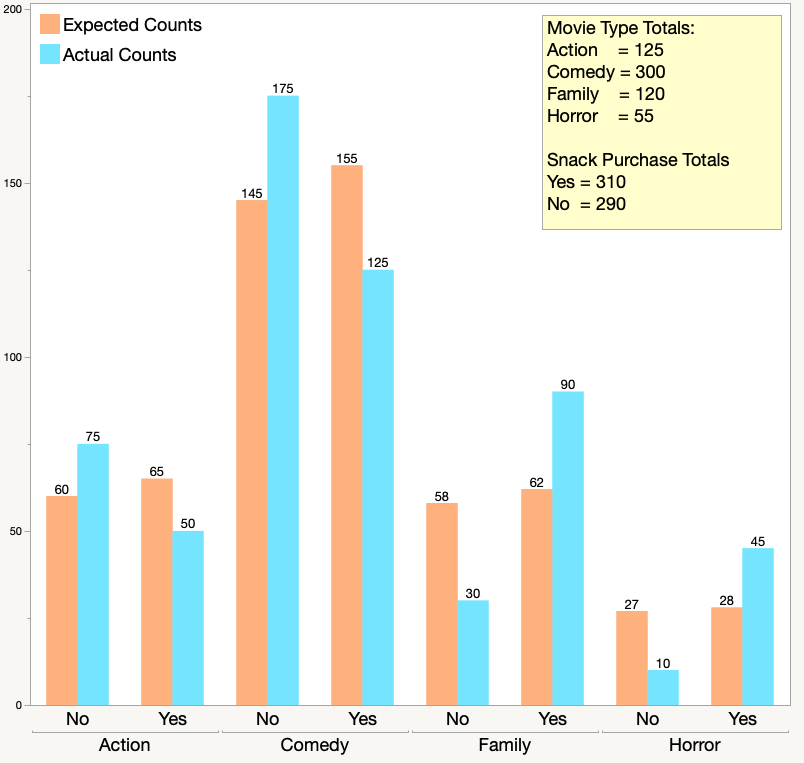

The side-by-side chart below shows the actual counts in blue, and the expected counts in orange. The counts appear at the top of the bars. The yellow box shows the movie type and snack purchase totals. These totals are needed to find the expected counts.

Compare the expected and actual counts for the Horror movies. You can see that more people than expected bought snacks and fewer people than expected chose not to buy snacks.

If you look across all four of the movie types and whether or not people bought snacks, you can see that there is a fairly large difference between actual and expected counts for most combinations. The independence test checks to see if the actual data is “close enough” to the expected counts that would occur if the two variables are independent. Even without a statistical test, most people would say that the two variables are not independent. The statistical test provides a common way to make the decision, so that everyone makes the same decision on the data.

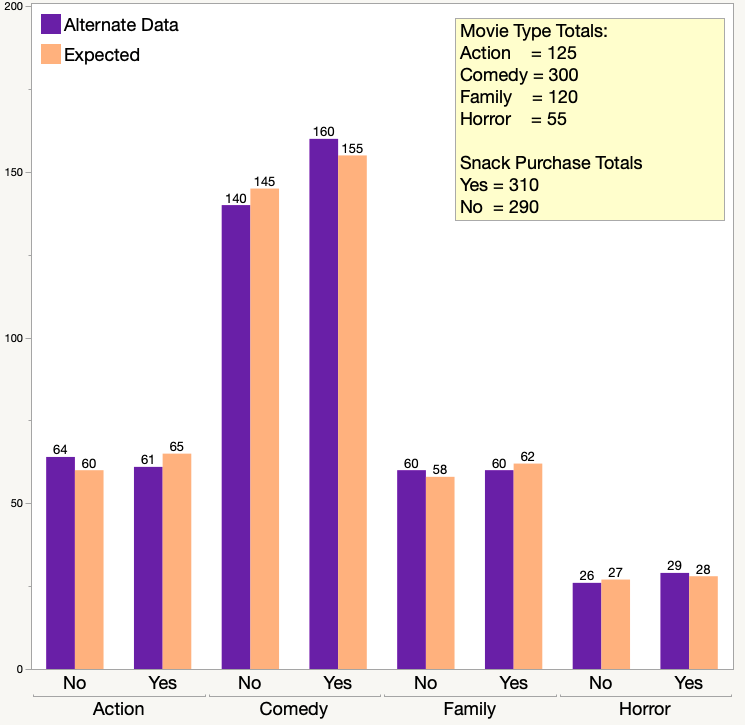

The chart below shows another possible set of data. This set has the exact same row and column totals for movie type and snack purchase, but the yes/no splits in the snack purchase data are different.

The purple bars show the actual counts in this data. The orange bars show the expected counts, which are the same as in our original data set. The expected counts are the same because the row totals and column totals are the same. Looking at the graph above, most people would think that the type of movie and snack purchases are independent. If you perform the Chi-square test of independence using this new data, the test statistic is 0.903. The Chi-square value is still 7.815 because the degrees of freedom are still three. You would fail to reject the idea of independence because 0.903 < 7.815. The owner of the movie theater can estimate how many snacks to buy regardless of the type of movies being shown.

Statistical details

Let’s look at the movie-snack data and the Chi-square test of independence using statistical terms.

Our null hypothesis is that the type of movie and snack purchases are independent. The null hypothesis is written as:

$ H_0: \text{Movie Type and Snack purchases are independent} $

The alternative hypothesis is the opposite.

$ H_a: \text{Movie Type and Snack purchases are not independent} $

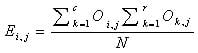

Before we calculate the test statistic, we find the expected counts. This is written as:

$ E_{ij} = \frac{R_i\times{C_j}}{N} $

The formula is for an i x j contingency table. That is a table with i rows and j columns. For example, $E_{11}$ is the expected count for the cell in the first row and first column. The formula shows $R_i$ as the row total for the i-th row, and $C_j$ as the column total for the j-th column. The overall sample size is N.

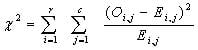

We calculate the test statistic using the formula below:

$ \chi^2 = \sum^n_{i,j=1} \frac{(O_{ij}-E_{ij})^2}{E_{ij}} $

In the formula above, we have n combinations of rows and columns. The Σ symbol means to add up the calculations for each combination. (We performed these same steps in the Movie-Snack example, beginning in Table 4.) The formula shows $O_{ij}$ as the Observed count for the ij-th combination and $E_{ij}$ as the Expected count for the combination. For the Movie-Snack example, we had four rows and two columns, so we had eight combinations.

We then compare the test statistic to the critical Chi-square value corresponding to our chosen alpha value and the degrees of freedom for our data. Using the Movie-Snack data as an example, we had set α = 0.05 and had three degrees of freedom. For the Movie-Snack data, the Chi-square value is written as:

$ χ_{0.05,3}^2 $

There are two possible results from our comparison:

- The test statistic is lower than the Chi-square value. You fail to reject the hypothesis of independence. In the movie-snack example, the theater owner can go ahead with the assumption that the type of movie a person sees has no relationship with whether or not they buy snacks.

- The test statistic is higher than the Chi-square value. You reject the hypothesis of independence. In the movie-snack example, the theater owner cannot assume that there is no relationship between the type of movie a person sees and whether or not they buy snacks.

Understanding p-values

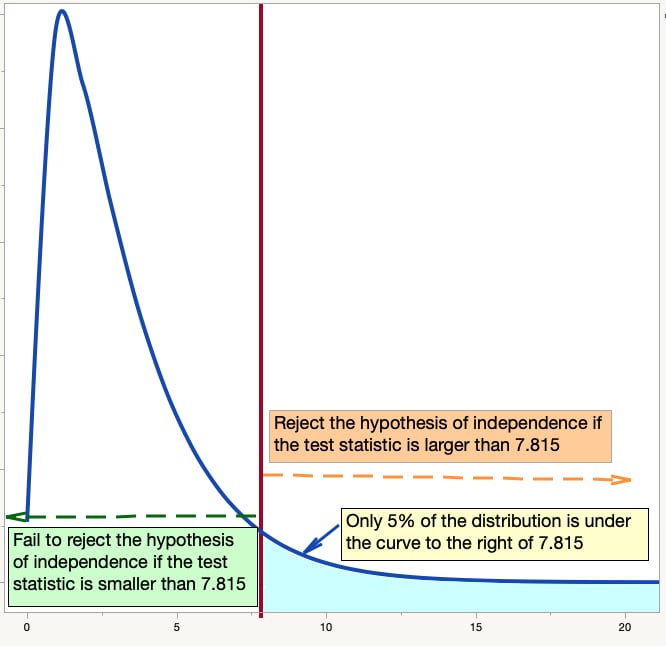

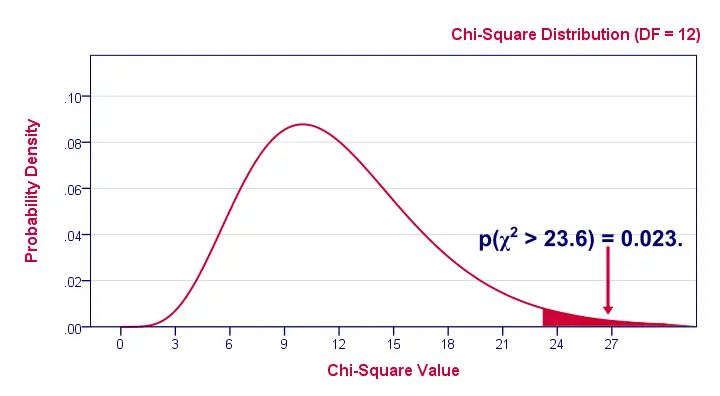

Let’s use a graph of the Chi-square distribution to better understand the p-values. You are checking to see if your test statistic is a more extreme value in the distribution than the critical value. The graph below shows a Chi-square distribution with three degrees of freedom. It shows how the value of 7.815 “cuts off” 95% of the data. Only 5% of the data from a Chi-square distribution with three degrees of freedom is greater than 7.815.

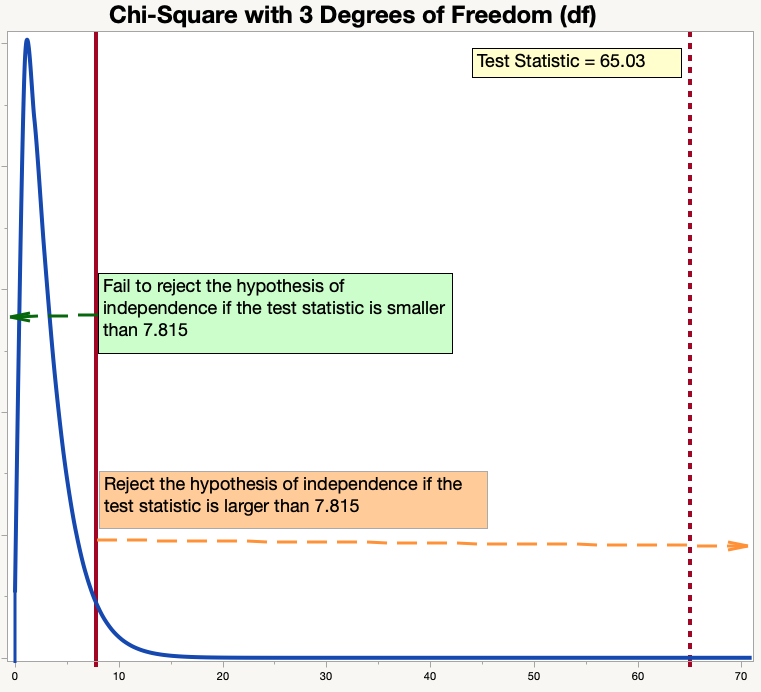

The next distribution graph shows our results. You can see how far out “in the tail” our test statistic is. In fact, with this scale, it looks like the distribution curve is at zero at the point at which it intersects with our test statistic. It isn’t, but it is very, very close to zero. We conclude that it is very unlikely for this situation to happen by chance. The results that we collected from our movie goers would be extremely unlikely if there were truly no relationship between types of movies and snack purchases.

Statistical software shows the p-value for a test. This is the likelihood of another sample of the same size resulting in a test statistic more extreme than the test statistic from our current sample, assuming that the null hypothesis is true. It’s difficult to calculate this by hand. For the distributions shown above, if the test statistic is exactly 7.815, then the p-value will be p = 0.05. With the test statistic of 65.03, the p-value is very, very small. In this example, most statistical software will report the p-value as “p < 0.0001.” This means that the likelihood of finding a more extreme value for the test statistic using another random sample (and assuming that the null hypothesis is correct) is less than one chance in 10,000.
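To make the p-value less of a black box, here is a minimal sketch that computes it for this example using only Python's standard library. It relies on a closed-form expression for the upper tail of a Chi-square distribution that holds specifically for three degrees of freedom; this formula is not part of the original tutorial and is an assumption of the sketch. Statistical packages handle any degrees of freedom through the incomplete gamma function (for example, `scipy.stats.chi2.sf` in SciPy).

```python
import math


def chi_square_p_value_df3(test_statistic):
    """Upper-tail probability of a Chi-square distribution with exactly 3 degrees of freedom.

    Closed form valid only for df = 3:
        P(X > x) = erfc(sqrt(x / 2)) + sqrt(2x / pi) * exp(-x / 2)
    """
    x = test_statistic
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


p_at_critical = chi_square_p_value_df3(7.815)   # test statistic equal to the critical value
p_movie_snacks = chi_square_p_value_df3(65.03)  # test statistic from the movie snacks data

print("p-value at 7.815:", round(p_at_critical, 4))
print("p-value at 65.03 is below 0.0001:", p_movie_snacks < 0.0001)
```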


Chi-Square (Χ²) Tests | Types, Formula & Examples

Published on May 23, 2022 by Shaun Turney. Revised on June 22, 2023.

A Pearson’s chi-square test is a statistical test for categorical data. It is used to determine whether your data are significantly different from what you expected. There are two types of Pearson’s chi-square tests:

- The chi-square goodness of fit test is used to test whether the frequency distribution of a categorical variable is different from your expectations.

- The chi-square test of independence is used to test whether two categorical variables are related to each other.


Pearson’s chi-square (Χ²) tests, often referred to simply as chi-square tests, are among the most common nonparametric tests. Nonparametric tests are used for data that don’t follow the assumptions of parametric tests, especially the assumption of a normal distribution.

If you want to test a hypothesis about the distribution of a categorical variable, you’ll need to use a chi-square test or another nonparametric test. Categorical variables can be nominal or ordinal and represent groupings such as species or nationalities. Because they can only have a few specific values, they can’t have a normal distribution.

Test hypotheses about frequency distributions

There are two types of Pearson’s chi-square tests, but they both test whether the observed frequency distribution of a categorical variable is significantly different from its expected frequency distribution. A frequency distribution describes how observations are distributed between different groups.

Frequency distributions are often displayed using frequency distribution tables. A frequency distribution table shows the number of observations in each group. When there are two categorical variables, you can use a specific type of frequency distribution table called a contingency table to show the number of observations in each combination of groups.

| Bird species | Frequency |
|---|---|
| House sparrow | 15 |
| House finch | 12 |
| Black-capped chickadee | 9 |
| Common grackle | 8 |
| European starling | 8 |
| Mourning dove | 6 |

| | Right-handed | Left-handed |
|---|---|---|
| American | 236 | 19 |
| Canadian | 157 | 16 |


Both of Pearson’s chi-square tests use the same formula to calculate the test statistic, chi-square (Χ²):

$ \chi^2 = \sum \frac{(O-E)^2}{E} $

Where:

- Χ² is the chi-square test statistic

- Σ is the summation operator (it means “take the sum of”)

- O is the observed frequency

- E is the expected frequency

The larger the difference between the observations and the expectations (O − E in the equation), the bigger the chi-square will be. To decide whether the difference is big enough to be statistically significant, you compare the chi-square value to a critical value.

A Pearson’s chi-square test may be an appropriate option for your data if all of the following are true:

- You want to test a hypothesis about one or more categorical variables. If one or more of your variables is quantitative, you should use a different statistical test. Alternatively, you could convert the quantitative variable into a categorical variable by separating the observations into intervals.

- The sample was randomly selected from the population.

- There are a minimum of five observations expected in each group or combination of groups.

The two types of Pearson’s chi-square tests are:

- Chi-square goodness of fit test

- Chi-square test of independence

Mathematically, these are actually the same test. However, we often think of them as different tests because they’re used for different purposes.

You can use a chi-square goodness of fit test when you have one categorical variable. It allows you to test whether the frequency distribution of the categorical variable is significantly different from your expectations. Often, but not always, the expectation is that the categories will have equal proportions.

Expectation of equal proportions

- Null hypothesis ($H_0$): The bird species visit the bird feeder in equal proportions.

- Alternative hypothesis ($H_A$): The bird species visit the bird feeder in different proportions.

Expectation of different proportions

- Null hypothesis ($H_0$): The bird species visit the bird feeder in the same proportions as the average over the past five years.

- Alternative hypothesis ($H_A$): The bird species visit the bird feeder in different proportions from the average over the past five years.
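As a rough illustration of the equal-proportions case, the sketch below computes the goodness of fit statistic in plain Python. It assumes that the bird species frequency table shown earlier is the sample being tested, which the article does not state explicitly; the function name is also an assumption. The degrees of freedom for a goodness of fit test are the number of groups minus one, a standard result that is not spelled out in this section.

```python
# Observed visits per species, taken from the bird species frequency table.
bird_counts = {
    "House sparrow": 15,
    "House finch": 12,
    "Black-capped chickadee": 9,
    "Common grackle": 8,
    "European starling": 8,
    "Mourning dove": 6,
}


def goodness_of_fit_statistic(observed, expected_proportions):
    """Chi-square statistic comparing observed counts to expected proportions."""
    total = sum(observed)
    statistic = 0.0
    for count, proportion in zip(observed, expected_proportions):
        expected = total * proportion  # expected frequency for this group
        statistic += (count - expected) ** 2 / expected
    return statistic


observed = list(bird_counts.values())
# Null hypothesis: every species visits in equal proportions.
equal_proportions = [1 / len(observed)] * len(observed)

gof_statistic = goodness_of_fit_statistic(observed, equal_proportions)
gof_df = len(observed) - 1  # number of groups minus one

print("chi-square:", round(gof_statistic, 2), "df:", gof_df)
```

For the second pair of hypotheses, the only change would be to pass the five-year average proportions instead of `equal_proportions`; those proportions are not given here, so they are left out of the sketch.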

You can use a chi-square test of independence when you have two categorical variables. It allows you to test whether the two variables are related to each other. If two variables are independent (unrelated), the probability of belonging to a certain group of one variable isn’t affected by the other variable.

- Null hypothesis ($H_0$): The proportion of people who are left-handed is the same for Americans and Canadians.

- Alternative hypothesis ($H_A$): The proportion of people who are left-handed differs between nationalities.

Other types of chi-square tests

Some consider the chi-square test of homogeneity to be another variety of Pearson’s chi-square test. It tests whether two populations come from the same distribution by determining whether the two populations have the same proportions as each other. You can consider it simply a different way of thinking about the chi-square test of independence.

McNemar’s test is a test that uses the chi-square test statistic. It isn’t a variety of Pearson’s chi-square test, but it’s closely related. You can conduct this test when you have a related pair of categorical variables that each have two groups. It allows you to determine whether the proportions of the variables are equal.

| | Like chocolate | Dislike chocolate |
|---|---|---|
| Like vanilla | 47 | 32 |
| Dislike vanilla | 8 | 13 |

- Null hypothesis ($H_0$): The proportion of people who like chocolate is the same as the proportion of people who like vanilla.

- Alternative hypothesis ($H_A$): The proportion of people who like chocolate is different from the proportion of people who like vanilla.
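The article does not give the formula for McNemar’s test, so the sketch below uses the standard uncorrected version as an assumption: only the two “disagreeing” cells matter (people who like one flavor but not the other), and the statistic is their squared difference divided by their sum. It is compared against a chi-square distribution with one degree of freedom. The function name is hypothetical.

```python
def mcnemar_statistic(table):
    """Uncorrected McNemar statistic for a 2 x 2 table [[a, b], [c, d]].

    Only the discordant cells b and c are used: (b - c)^2 / (b + c).
    """
    b = table[0][1]
    c = table[1][0]
    return (b - c) ** 2 / (b + c)


# Rows: like vanilla, dislike vanilla. Columns: like chocolate, dislike chocolate.
flavors = [[47, 32], [8, 13]]

mcnemar_value = mcnemar_statistic(flavors)
print("McNemar chi-square:", mcnemar_value)
```

Some textbooks and software apply a continuity correction to this statistic, which would give a slightly smaller value.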

There are several other types of chi-square tests that are not Pearson’s chi-square tests, including the test of a single variance and the likelihood ratio chi-square test.


The exact procedure for performing a Pearson’s chi-square test depends on which test you’re using, but it generally follows these steps:

- Create a table of the observed and expected frequencies. This can sometimes be the most difficult step because you will need to carefully consider which expected values are most appropriate for your null hypothesis.

- Calculate the chi-square value from your observed and expected frequencies using the chi-square formula.

- Find the critical chi-square value in a chi-square critical value table or using statistical software.

- Compare the chi-square value to the critical value to determine which is larger.

- Decide whether to reject the null hypothesis. You should reject the null hypothesis if the chi-square value is greater than the critical value. If you reject the null hypothesis, you can conclude that your data are significantly different from what you expected.
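The steps above can be sketched end to end for a test of independence. This is an illustrative sketch under stated assumptions: it uses the nationality and handedness contingency table from earlier in the article as the data, assumes a significance level of 0.05, and takes the critical value 3.841 (one degree of freedom) from a standard chi-square table rather than from this article. The function name is hypothetical.

```python
def pearson_chi_square(observed, critical_value):
    """Run the five steps for a contingency table given as a list of rows."""
    # Step 1: expected frequencies under the null hypothesis of independence.
    row_totals = [sum(row) for row in observed]
    col_totals = [sum(col) for col in zip(*observed)]
    n = sum(row_totals)
    expected = [[r * c / n for c in col_totals] for r in row_totals]

    # Step 2: chi-square value from the observed and expected frequencies.
    chi_square = sum(
        (o - e) ** 2 / e
        for obs_row, exp_row in zip(observed, expected)
        for o, e in zip(obs_row, exp_row)
    )

    # Steps 3 to 5: compare with the critical value and decide.
    reject_null = chi_square > critical_value
    return expected, chi_square, reject_null


# Rows: American, Canadian. Columns: right-handed, left-handed.
handedness = [[236, 19], [157, 16]]

# Assumed critical value for df = (2 - 1) * (2 - 1) = 1 at alpha = 0.05.
CRITICAL_VALUE_DF1 = 3.841

expected, chi_square, reject_null = pearson_chi_square(handedness, CRITICAL_VALUE_DF1)
print("chi-square:", round(chi_square, 2))
print("Reject the null hypothesis:", reject_null)
```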

If you decide to include a Pearson’s chi-square test in your research paper, dissertation or thesis, you should report it in your results section. You can follow these rules if you want to report statistics in APA Style:

- You don’t need to provide a reference or formula since the chi-square test is a commonly used statistic.

- Refer to chi-square using its Greek symbol, Χ². Although the symbol looks very similar to an “X” from the Latin alphabet, it’s actually a different symbol. Greek symbols should not be italicized.

- Include a space on either side of the equal sign.

- If your chi-square is less than one, you should include a leading zero (a zero before the decimal point) since the chi-square can be greater than one.

- Provide two significant digits after the decimal point.

- Report the chi-square alongside its degrees of freedom, sample size, and p value, following this format: Χ² (degrees of freedom, N = sample size) = chi-square value, p = p value.
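As a small illustration of the reporting format, the helper below assembles the string from its parts. The function name and the example numbers are hypothetical. One detail is an assumption rather than something stated in the rules above: the p value is printed with three decimals and without a leading zero, on the reasoning that a p value can never exceed one.

```python
def report_chi_square(statistic, df, sample_size, p_value):
    """Format a result as: Χ² (df, N = sample size) = chi-square value, p = p value."""
    # Chi-square keeps its leading zero and two digits after the decimal point.
    statistic_text = f"{statistic:.2f}"
    # Assumption: drop the leading zero of the p value, since p cannot exceed one.
    p_text = f"{p_value:.3f}".lstrip("0")
    return f"Χ² ({df}, N = {sample_size}) = {statistic_text}, p = {p_text}"


# Hypothetical values, used only to show the layout of the report.
report = report_chi_square(0.4436, 1, 428, 0.505)
print(report)
```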


The two main chi-square tests are the chi-square goodness of fit test and the chi-square test of independence.

Both chi-square tests and t tests can test for differences between two groups. However, a t test is used when you have a dependent quantitative variable and an independent categorical variable (with two groups). A chi-square test of independence is used when you have two categorical variables.

Both correlations and chi-square tests can test for relationships between two variables. However, a correlation is used when you have two quantitative variables and a chi-square test of independence is used when you have two categorical variables.

Quantitative variables are any variables where the data represent amounts (e.g. height, weight, or age).

Categorical variables are any variables where the data represent groups. This includes rankings (e.g. finishing places in a race), classifications (e.g. brands of cereal), and binary outcomes (e.g. coin flips).

You need to know what type of variables you are working with to choose the right statistical test for your data and interpret your results.


Turney, S. (2023, June 22). Chi-Square (Χ²) Tests | Types, Formula & Examples. Scribbr. Retrieved August 14, 2024, from https://www.scribbr.com/statistics/chi-square-tests/


Statistics By Jim

Making statistics intuitive

How the Chi-Squared Test of Independence Works

By Jim Frost

Chi-squared tests of independence determine whether a relationship exists between two categorical variables. Do the values of one categorical variable depend on the value of the other categorical variable? If the two variables are independent, knowing the value of one variable provides no information about the value of the other variable.

I’ve previously written about Pearson’s chi-square test of independence using a fun Star Trek example. Are the uniform colors related to the chances of dying? You can test the notion that the infamous red shirts have a higher likelihood of dying. In that post, I focused on the purpose of the test, applied it to this example, and interpreted the results.

In this post, I’ll take a bit of a different approach. I’ll show you the nuts and bolts of how to calculate the expected values, chi-square value, and degrees of freedom. Then you’ll learn how to use the chi-squared distribution in conjunction with the degrees of freedom to calculate the p-value.

I’ve used the same approach to explain how:

- t-Tests work.

- F-tests work in one-way ANOVA.

Of course, you’ll usually just let your statistical software perform all calculations. However, understanding the underlying methodology helps you fully comprehend the analysis.

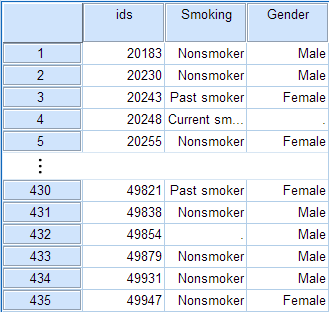

Chi-Squared Example Dataset

For the Star Trek example, uniform color and status are the two categorical variables. The contingency table below shows the combination of variable values, frequencies, and percentages.

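| Status | Blue | Gold | Red | Row total |
|---|---|---|---|---|
| Dead | 7 | 9 | 24 | 40 |
| Alive | 129 | 46 | 215 | 390 |
| Column total | 136 | 55 | 239 | N = 430 |
| % Dead | 5.15% | 16.36% | 10.04% | |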

If uniform color and status were unrelated, we would expect roughly equal fatality rates across the three colors. However, our fatality rates are not equal. Gold has the highest fatality rate at 16.36%, while Blue has the lowest at 5.15%. Red is in the middle at 10.04%. Does this inequality in our sample suggest that the fatality rates are different in the population? Does a relationship exist between uniform color and fatalities?

Thanks to random sampling error, our sample’s fatality rates don’t exactly equal the population’s rates. If the population rates are equal, we’d likely still see differences in our sample. So, the question becomes, after factoring in sampling error, are the fatality rates in our sample different enough to conclude that they’re different in the population? In other words, we want to be confident that the observed differences represent a relationship in the population rather than merely random fluctuations in the sample. That’s where Pearson’s chi-squared test for independence comes in!
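The fatality rates quoted above are simply column percentages, which a short sketch can reproduce. It assumes that the first row of the contingency table counts deaths and the second row counts survivors (labeled `alive` here, a name chosen for this example), with columns in the order Blue, Gold, Red.

```python
# Counts per uniform color; "alive" is an assumed label for the non-fatality row.
dead = {"Blue": 7, "Gold": 9, "Red": 24}
alive = {"Blue": 129, "Gold": 46, "Red": 215}

# Fatality rate per color = deaths / column total, as a percentage.
fatality_rates = {
    color: 100 * dead[color] / (dead[color] + alive[color]) for color in dead
}

# Overall fatality rate across all 430 crew members in the sample.
total_dead = sum(dead.values())
total_crew = total_dead + sum(alive.values())
overall_rate = 100 * total_dead / total_crew

for color, rate in fatality_rates.items():
    print(f"{color}: {rate:.2f}%")
print(f"Overall: {overall_rate:.2f}%")
```

The overall rate is the benchmark that each color would be expected to match, apart from sampling error, if uniform color and status were independent.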

Hypotheses for Our Test

The two hypotheses for the chi-squared test of independence are the following:

- Null: The variables are independent. No relationship exists.

- Alternative: A relationship between the variables exists.


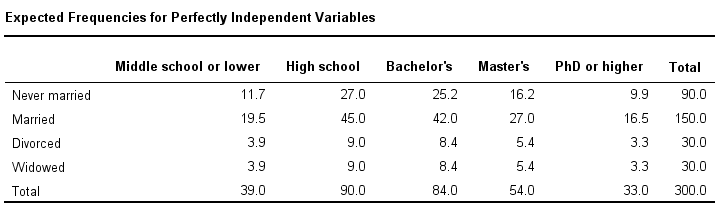

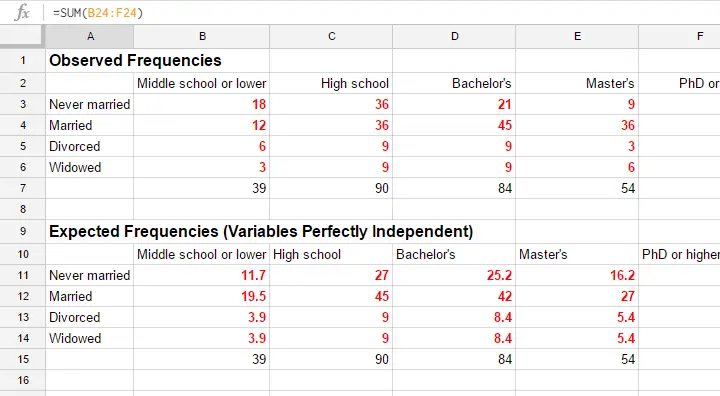

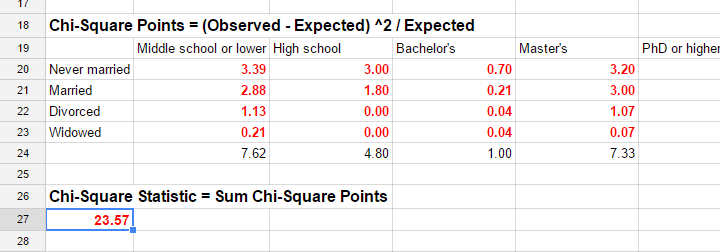

Calculating the Expected Frequencies for the Chi-squared Test of Independence

The chi-squared test of independence compares our sample data in the contingency table to the distribution of values we’d expect if the null hypothesis is correct. Let’s construct the contingency table we’d expect to see if the null hypothesis is true for our population.

For chi-squared tests, the term “expected frequencies” refers to the values we’d expect to see if the null hypothesis is true. To calculate the expected frequency for a specific combination of categorical variables (e.g., blue shirts who died), multiply the column total (Blue) by the row total (Dead), and divide by the sample size.

(Row total × Column total) / Sample size = Expected value for one table cell

To calculate the expected frequency for the Dead/Blue cell in our dataset, do the following:

- Find the row total for Dead (40)

- Find the column total for Blue (136)

- Multiply those two values and divide by the sample size (430)

40 * 136 / 430 = 12.65

If the null hypothesis is true, we’d expect to see 12.65 fatalities for wearers of the Blue uniforms in our sample. Of course, we can’t have a fraction of a death, but that doesn’t affect the results.
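The same row-total-times-column-total rule can be applied to every cell at once. The following is a minimal sketch, assuming the observed counts from the article's contingency table (rows Dead and Alive, columns Blue, Gold, Red); the function name `expected_frequencies` is a hypothetical helper, not part of any library.

```python
# Observed counts from the article's contingency table.
# Rows: Dead, Alive. Columns: Blue, Gold, Red.
observed = [
    [7, 9, 24],
    [129, 46, 215],
]


def expected_frequencies(table):
    """Return the expected counts under independence.

    Each cell is (row total * column total) / sample size.
    """
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    return [[r * c / n for c in col_totals] for r in row_totals]


expected = expected_frequencies(observed)
for label, row in zip(["Dead", "Alive"], expected):
    print(label, [round(value, 2) for value in row])
```

The expected counts always sum to the sample size (430 here), which is a quick sanity check on the arithmetic.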

Contingency Table with the Expected Values

I’ll calculate the expected values for all six cells that represent the combinations of the three uniform colors and two statuses. I’ll also include the observed values in our sample. Expected values are in parentheses.

| | Blue | Gold | Red | Row total |
|---|---|---|---|---|
| Dead | 7 (12.65) | 9 (5.12) | 24 (22.23) | 40 |
| Alive | 129 (123.35) | 46 (49.88) | 215 (216.77) | 390 |
| Expected % dead | 9.3% | 9.3% | 9.3% | |

In this table, notice how the column percentages for the expected dead are all 9.3%. This equality occurs when the null hypothesis is valid, which is the condition that the expected values represent.

Using this table, we can also compare the values we observe in our sample to the frequencies we’d expect if the null hypothesis that the variables are not related is correct.

For example, the observed frequency for Blue/Dead is less than the expected value (7 < 12.65). In our sample, deaths of those in blue uniforms occurred less frequently than we’d expect if the variables are independent. On the other hand, the observed frequency for Gold/Dead is greater than the expected value (9 > 5.12). Meanwhile, the observed frequency for Red/Dead approximately equals the expected value. This interpretation matches what we concluded by assessing the column percentages in the first contingency table.

Pearson’s chi-squared test works by mathematically comparing observed frequencies to the expected values and boiling all those differences down into one number. Let’s see how it does that!

Related post : Using Contingency Tables to Calculate Probabilities

Calculating the Chi-Squared Statistic

Most hypothesis tests calculate a test statistic. For example, t-tests use t-values and F-tests use F-values as their test statistics. These statistical tests compare your observed sample data to what you would expect if the null hypothesis is true. The calculations reduce your sample data down to one value that represents how different your data are from the null. Learn more about Test Statistics .

For chi-squared tests, the test statistic is, unsurprisingly, chi-squared, or χ².

The chi-squared calculations involve a familiar concept in statistics—the sum of the squared differences between the observed and expected values. This concept is similar to how regression models assess goodness-of-fit using the sum of the squared differences.

Here’s the formula for chi-squared.

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

Let’s walk through it!

To calculate the chi-squared statistic, take the difference between a pair of observed (O) and expected values (E), square the difference, and divide that squared difference by the expected value. Repeat this process for all cells in your contingency table and sum those values. The resulting value is χ². We’ll calculate it for our example data shortly!

Important Considerations about the Chi-Squared Statistic

Notice several important considerations about chi-squared values:

Zero represents the null hypothesis. If all your observed frequencies equal the expected frequencies exactly, the chi-squared value for each cell equals zero, and the overall chi-squared statistic equals zero. Zero indicates your sample data exactly match what you’d expect if the null hypothesis is correct.

Squaring the differences ensures both that cell values must be non-negative and that larger differences are weighted more than smaller differences. A cell can never subtract from the chi-squared value.

Larger values represent a greater difference between your sample data and the null hypothesis. Chi-squared tests are one-tailed tests rather than the more familiar two-tailed tests. The test determines whether the entire set of differences exceeds a significance threshold. If your χ² passes the limit, your results are statistically significant! You can reject the null hypothesis and conclude that the variables are dependent: a relationship exists.

Related post : One-tailed and Two-tailed Hypothesis Tests

Calculating Chi-Squared for our Example Data

Let’s calculate the chi-squared statistic for our example data! To do that, I’ll rearrange the contingency table, making it easier to illustrate how to calculate the sum of the squared differences.

The first two columns indicate the combination of categorical variable values. The next two are the observed and expected values that we calculated before. The last column is the squared difference divided by the expected value for each row. The bottom line sums those values.

Our chi-squared test statistic is 6.17. Ok, great. What does that mean? Larger values indicate a more substantial divergence between our observed data and the null hypothesis. However, the number by itself is not useful because we don’t know if it’s unusually large. We need to place it into a broader context to determine whether it is an extreme value.
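To make the cell-by-cell sum concrete, here is a minimal sketch that recomputes the statistic from the observed counts in the article's contingency table. The helper name `chi_squared_statistic` is hypothetical. Carried at full precision, the arithmetic gives roughly 6.19, slightly above the 6.17 quoted above; the gap looks like a rounding difference and does not change the conclusion.

```python
# Observed counts: rows Dead, Alive; columns Blue, Gold, Red.
observed = [
    [7, 9, 24],
    [129, 46, 215],
]


def chi_squared_statistic(table):
    """Sum (O - E)^2 / E over every cell of a two-way table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, obs in enumerate(row):
            exp = row_totals[i] * col_totals[j] / n  # expected count under the null
            statistic += (obs - exp) ** 2 / exp
    return statistic


chi2 = chi_squared_statistic(observed)
print(f"chi-squared = {chi2:.2f}")
```

The Blue/Dead and Gold/Dead cells contribute most of the total, which matches the earlier observation that those two cells depart furthest from their expected values.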

Using the Chi-Squared Distribution to Test Hypotheses

One chi-squared test produces a single chi-squared value. However, imagine performing the following process.

First, assume the null hypothesis is valid for the population. At the population level, there is no relationship between the two categorical variables. Now, we’ll repeat our study many times by drawing many random samples from this population using the same design and sample size. Next, we perform the chi-squared test of independence on all the samples and plot the distribution of the chi-squared values. This distribution is known as a sampling distribution, which is a type of probability distribution.

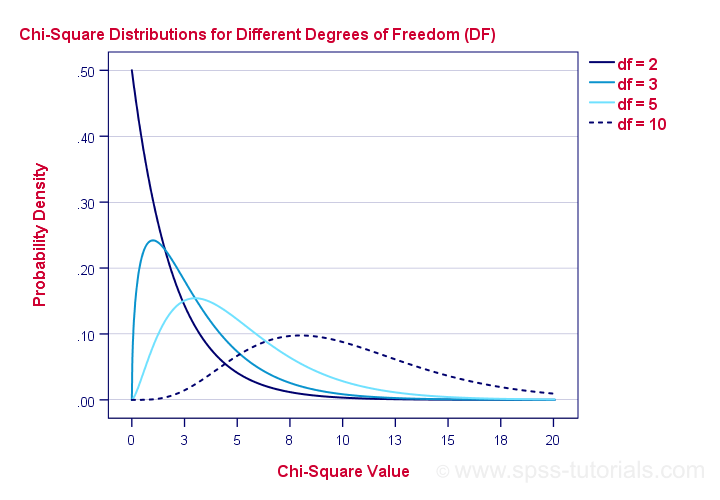

If we follow this procedure, we create a graph that displays the distribution of chi-squared values for a population where the null hypothesis is true. We use sampling distributions to calculate probabilities for how unlikely our sample statistic is if the null hypothesis is correct. Chi-squared tests use the chi-square distribution.
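The repeated-sampling thought experiment can be sketched with a small simulation. This is an illustration under stated assumptions, not the article's method: it treats the sample's row and column proportions as the population proportions, assumes independence when drawing each observation, and uses a fixed seed and 2,000 repetitions chosen arbitrarily. The function names are hypothetical.

```python
import random


def chi_squared_statistic(table):
    """Pearson chi-squared statistic for a two-way table of counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / n
            if expected > 0:  # skip cells in an empty row or column
                statistic += (observed - expected) ** 2 / expected
    return statistic


def simulate_null(row_probs, col_probs, n, n_sims, seed=0):
    """Draw n_sims samples of size n from a population where the
    row and column variables are independent; return the statistics."""
    rng = random.Random(seed)
    cells = [(i, j) for i in range(len(row_probs)) for j in range(len(col_probs))]
    # Under independence, a cell's probability is the product of its margins.
    weights = [row_probs[i] * col_probs[j] for i, j in cells]
    statistics = []
    for _ in range(n_sims):
        table = [[0] * len(col_probs) for _ in row_probs]
        for i, j in rng.choices(cells, weights=weights, k=n):
            table[i][j] += 1
        statistics.append(chi_squared_statistic(table))
    return statistics


# Assumed population proportions, taken from the sample's margins.
row_probs = [40 / 430, 390 / 430]             # Dead, Alive
col_probs = [136 / 430, 55 / 430, 239 / 430]  # Blue, Gold, Red

simulated = simulate_null(row_probs, col_probs, n=430, n_sims=2000)
share_extreme = sum(s >= 6.17 for s in simulated) / len(simulated)
print(f"Share of simulated statistics >= 6.17: {share_extreme:.3f}")
```

The printed share is a simulation-based estimate of how often a value at least as large as the study's statistic appears when the null hypothesis is true. It should land in the neighborhood of a few percent, though the exact figure depends on the seed and the number of repetitions.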

Fortunately, we don’t need to collect many random samples to create this graph! Statisticians understand the properties of chi-squared distributions so we can estimate the sampling distribution using the details of our design.

Our goal is to determine whether our sample chi-squared value is so rare that it justifies rejecting the null hypothesis for the entire population. The chi-squared distribution provides the context for making that determination. We’ll calculate the probability of obtaining a chi-squared value that is at least as high as the value that our study found (6.17).

This probability has a name—the P-value! A low probability indicates that our sample data are unlikely when the null hypothesis is true.

Alternatively, you can use a chi-square table to determine whether our study’s chi-square test statistic exceeds the critical value .

Related posts : Sampling Distributions , Understanding Probability Distributions and Interpreting P-values

Graphing the Chi-Squared Test Results for Our Example

For chi-squared tests, the degrees of freedom define the shape of the chi-squared distribution for a design. Chi-square tests use this distribution to calculate p-values. The graph below displays several chi-square distributions with differing degrees of freedom.

For a table with r rows and c columns, the method for calculating degrees of freedom for a chi-square test is (r-1) (c-1). For our example, we have two rows and three columns: (2-1) * (3-1) = 2 df.

Read my post about degrees of freedom to learn about this concept along with a more intuitive way of understanding degrees of freedom in chi-squared tests of independence.

Below is the chi-squared distribution for our study’s design.

The distribution curve displays the likelihood of chi-squared values for a population where there is no relationship between uniform color and status at the population level. I shaded the region that corresponds to chi-square values greater than or equal to our study’s value (6.17). When the null hypothesis is correct, chi-square values fall in this area approximately 4.6% of the time, which is the p-value (0.046). With a significance level of 0.05, our sample data are unusual enough to reject the null hypothesis.
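For the special case of 2 degrees of freedom, the upper-tail probability of the chi-squared distribution has a simple closed form, exp(-x/2), so the shaded area can be checked without statistical software. The sketch below relies on that identity and therefore only applies when df = 2; the function names are hypothetical.

```python
import math


def chi2_upper_tail_df2(x):
    """P(chi-squared >= x) for 2 degrees of freedom (closed form)."""
    return math.exp(-x / 2)


def chi2_critical_value_df2(alpha):
    """Value that a chi-squared variable with 2 df exceeds with probability alpha."""
    return -2 * math.log(alpha)


rows, columns = 2, 3
df = (rows - 1) * (columns - 1)  # (r-1)(c-1) = 2 for this design

p_value = chi2_upper_tail_df2(6.17)
critical = chi2_critical_value_df2(0.05)
print(df, round(p_value, 3), round(critical, 2))  # prints: 2 0.046 5.99
```

Comparing the statistic to the critical value (6.17 > 5.99) and comparing the p-value to the significance level (0.046 < 0.05) are two views of the same decision.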

The sample evidence suggests that a relationship between the variables exists in the population. While this test doesn’t indicate red shirts have a higher chance of dying, there is something else going on with red shirts. Read my other chi-squared post to learn about that!

Related Reading

When you have smaller sample sizes, you might need to use Fisher’s exact test instead of the chi-square version. To learn more, read my post, Fisher’s Exact Test: Using and Interpreting .

Learn more about How to Find the P Value .

You can also read about the chi-square goodness of fit test , which assesses the distribution of outcomes for a categorical or discrete variable.

Pearson’s chi-squared test for independence doesn’t tell you the effect size. To understand the strength of the relationship, you’d need to use something like Cramér’s V, which is a measure of association like Pearson’s correlation —except for categorical variables. That’s the topic of a future post!


Reader Interactions

November 15, 2021 at 1:56 pm

Jim – I want to start by saying that I love your site. It has helped me out greatly during many occasions. In this particular example I am interested in understanding the logic around the math for the expected values. For example, can you explain how I should interpret scaling the total number dead by the total number blue?

From there I get that we divide by the total number of people to get the number of blue deaths expected within the group of 430 people. Is this a formula that is well known for contingency tables or did you apply that strictly for this scenario?

Hopefully this question made sense?

Either way, thanks for contributing to the community!

November 16, 2021 at 11:48 am

I’m so glad to hear that my site has been helpful!

I’m not 100% sure what you’re asking, so I’m not sure if I’m answering your question. To start, the formulas are the standard ones for the chi-squared test of independence, which you use in conjunction with contingency tables. You’d use the same methods and formulas for other datasets.

The portion you’re asking about is how to calculate the expected number for blue deaths if there is no association between uniform color and deaths (i.e., the null hypothesis of the test is true). So, the interpretation of the value is: If there is no relationship between uniform color and deaths, we’d expect 12.6 fatalities among those wearing blue uniforms. The test as a whole compares these expected values (for all table cells) to the observed values to determine whether the data support rejecting the null hypothesis and concluding that there is a relationship between the variables.

April 22, 2021 at 7:38 am

I teach AP Stat and am planning on using your example. However, in checking conditions I would like to be able to give background on the origin of the data. I went to your link and found that this data was collected for the TV episodes. Are those the episodes just for the original series?

April 23, 2021 at 11:21 pm

That’s great you’re teaching an AP Stats class! 🙂

Yes, the data I use are from the original TV series that aired from 1966-69.

July 5, 2020 at 12:34 pm

Thank you for your gracious reply. I’m especially happy because it meant that I actually understood! You’ve done a great service with this blog; I plan to return regularly! Thank you.

July 5, 2020 at 5:43 pm

I was thinking exactly that after fixing the comment. It would make a perfect comprehension test. Read this article and find the two incorrect letters! You passed! 🙂

July 4, 2020 at 9:13 am

I very much appreciate your clear explanations. I’m a “50 something” trying to finish a PhD in Library Science and my brain needs the help!

One question, please?

You write above:

Larger values represent a greater difference between your sample data and the null hypothesis. Chi-squared tests are one-tailed tests rather than the more familiar two-tailed tests. The test determines whether the entire set of differences exceeds a significance threshold. If your χ2 passes the limit, your results are statistically significant! You can reject the null hypothesis and conclude that the variables are independent.

I thought that rejecting the null hypothesis allowed you to conclude the opposite. If the null hypothesis is

Null: The variables are independent. No relationship exists.

Then rejecting the Null hypothesis means rejecting that the variables are independent, not concluding that the variables are independent.

This is, please, a honest question, (not being “that guy”; i’m not smart enough!).

Again, thank you for your work!! I’m going to check to see if you cover Kendall’s W, as it’s central to a paper I’m reading!

July 4, 2020 at 3:08 pm

First, I definitely welcome all questions! And, especially in this case because you caught a typo! You’re correct about what rejecting the null hypothesis means for this test. I’ve updated the text to say “and conclude that the variables are dependent.” I double-checked the rest of the article, and all the other text about conclusions based on significance is correct. Just a brain malfunction on my part! I’m grateful you caught that as that little slip changes the entire meaning!

Alas, I don’t cover Kendall’s W–at least not yet. I plan to add that down the road.

April 28, 2020 at 7:28 pm

Thanks Jim. Your explanations are so effective, yet easy to understand!

April 26, 2020 at 8:54 pm

Thank you Jim. Great post and reply. I have a question which is an extension of Michael’s question.

In general, it seems like one could build any test statistic. Find the distribution of your statistic under the null (say using bootstrap), and that will give you a p-value for your dataset.

Are chi-squared, t, or F-statistics special in some way? Or do we continue to use them simply because people have used them historically?

April 27, 2020 at 12:31 am

Originally, hypothesis tests that used these distributions were easier to calculate. You could calculate the test statistic using a simple formula and then look it up in a table. Later, it got even easier when the computer could both calculate the test statistic and tell you its p-value. It’s really the ease of calculation that made them special along with the theories behind them.

Now, we have such powerful computers that they can easily construct very large sets of bootstrap samples. That would’ve been difficult earlier. So, a large part of the answer is that bootstrapping really wasn’t feasible earlier and so the use of the chi-squared, t, and F distributions became the norm. The historically accepted standards.

It’s possible that over time bootstrap methods will be used more. I haven’t done extensive research into how efficient they are compared to using the various distributions, but what I have done indicates they are at least roughly on par. If you haven’t, I’d suggest reading my post about bootstrapping for more information.

Thanks for asking the great question!

January 31, 2020 at 1:29 am

Nice explanation

January 30, 2020 at 4:21 am

This has started my year, so far so good, Thank you Jim.

January 29, 2020 at 1:32 am

great lesson thanks

January 28, 2020 at 9:24 pm

Thankyou Jim, I will read and calc this lesson today, at 3 o’clock Brasilia time.

January 28, 2020 at 4:40 am

Thank You Sir

January 27, 2020 at 8:49 am

Great post, thanks for writing it. I am looking forward to the Cramer’s V post!

As a person just starting to dive into statistics, I am curious why we so often square the differences to make calculations. It seems squaring a difference will put too much weight on large differences. For example, in the chi-square test what if we used the absolute value of observed and expected differences? Just something I have been wondering about.

January 28, 2020 at 11:43 pm

Hi Michael,

There are several ways of looking at your question. In some cases, if you just want to know how far observations are from the mean for a dataset, you would be justified using the mean absolute deviation rather than the standard deviation, which incorporates squared deviations but then takes the square root.

However, in other cases, the squared deviations are built into the underlying analysis. Such as in linear regression where it penalizes larger errors which helps force them to be smaller. Otherwise, the regression line would not “consider” larger errors to be much worse than smaller errors. Here’s an article about it in the regression context .

Or, if you’re working with the normal distribution and using it to calculate probabilities or whatnot, that distribution has the mean and standard deviation as parameters. And the standard deviation incorporates squared differences. You could not work with the normal distribution using mean absolute deviations (MAD).

In a similar vein for chi-squared tests, you have to realize that the chi-squared distribution is based on squared differences. So, if you wanted to do a similar analysis but with the mean absolute deviation (MAD), you’d have to devise an entirely new test statistic and sampling distribution for it! You couldn’t just use the chi-squared distribution because that is specifically for these differences that use squaring. Same thing for F-tests which use ratios of variances, and variances are of course based on squared differences. Again, to use MAD for something like ANOVA, you’d need to come up with a new test statistic and sampling distribution!

But, the general reason is that squaring does weight large differences more heavily and that fits in with the rationale that given a distribution of values, outlier values should be weighted more because they are relatively unlikely to occur so when they do it’s noteworthy. It makes those large differences between the expected and the observed more “odd.” And, some analyses use an underlying sampling distribution that is based on a test statistic calculated using squared differences in some fashion.

January 27, 2020 at 2:08 am

Thank you Jim.

January 27, 2020 at 1:12 am

Great lesson Jim! You’re putting it in very simple terms for non-statisticians. Thanks for sharing the knowledge!

January 26, 2020 at 8:32 pm

Thanks for sharing, Jim!


Teach yourself statistics

Chi-Square Test of Independence

This lesson explains how to conduct a chi-square test for independence . The test is applied when you have two categorical variables from a single population. It is used to determine whether there is a significant association between the two variables.

For example, in an election survey, voters might be classified by gender (male or female) and voting preference (Democrat, Republican, or Independent). We could use a chi-square test for independence to determine whether gender is related to voting preference. The sample problem at the end of the lesson considers this example.

When to Use Chi-Square Test for Independence

The test procedure described in this lesson is appropriate when the following conditions are met:

- The sampling method is simple random sampling .

- The variables under study are each categorical .

- If sample data are displayed in a contingency table , the expected frequency count for each cell of the table is at least 5.

This approach consists of four steps: (1) state the hypotheses, (2) formulate an analysis plan, (3) analyze sample data, and (4) interpret results.

State the Hypotheses

Suppose that Variable A has r levels, and Variable B has c levels. The null hypothesis states that knowing the level of Variable A does not help you predict the level of Variable B. That is, the variables are independent.

H₀: Variable A and Variable B are independent.

Hₐ: Variable A and Variable B are not independent.

The alternative hypothesis is that knowing the level of Variable A can help you predict the level of Variable B.

Note: Support for the alternative hypothesis suggests that the variables are related; but the relationship is not necessarily causal, in the sense that one variable "causes" the other.

Formulate an Analysis Plan

The analysis plan describes how to use sample data to reject or fail to reject the null hypothesis. The plan should specify the following elements.

- Significance level. Often, researchers choose significance levels equal to 0.01, 0.05, or 0.10; but any value between 0 and 1 can be used.

- Test method. Use the chi-square test for independence to determine whether there is a significant relationship between two categorical variables.

Analyze Sample Data

Using sample data, find the degrees of freedom, expected frequencies, test statistic, and the P-value associated with the test statistic. The approach described in this section is illustrated in the sample problem at the end of this lesson.

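- Degrees of freedom. With r levels of Variable A and c levels of Variable B, the degrees of freedom are

$$DF = (r - 1)(c - 1)$$

- Expected frequencies. The expected frequency count for level r of Variable A and level c of Variable B is

$$E_{r,c} = \frac{n_r \, n_c}{n}$$

where n_r is the number of observations at level r of Variable A, n_c is the number of observations at level c of Variable B, and n is the sample size.

- Test statistic. With O_{r,c} denoting the observed frequency count in the same cell, the test statistic is

$$\chi^2 = \sum \frac{(O_{r,c} - E_{r,c})^2}{E_{r,c}}$$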

- P-value. The P-value is the probability of observing a sample statistic at least as extreme as the test statistic, assuming the null hypothesis is true. Since the test statistic is a chi-square, use the Chi-Square Distribution Calculator to assess the probability associated with the test statistic. Use the degrees of freedom computed above.

Interpret Results

If the sample findings are unlikely, given the null hypothesis, the researcher rejects the null hypothesis. Typically, this involves comparing the P-value to the significance level , and rejecting the null hypothesis when the P-value is less than the significance level.

Test Your Understanding

A public opinion poll surveyed a simple random sample of 1000 voters. Respondents were classified by gender (male or female) and by voting preference (Republican, Democrat, or Independent). Results are shown in the contingency table below.

| | Rep | Dem | Ind | Row total |
|---|---|---|---|---|
| Male | 200 | 150 | 50 | 400 |
| Female | 250 | 300 | 50 | 600 |
| Column total | 450 | 450 | 100 | 1000 |

The Rep, Dem, and Ind columns are the voting preferences.

Is there a gender gap? Do the men's voting preferences differ significantly from the women's preferences? Use a 0.05 level of significance.

The solution to this problem takes four steps: (1) state the hypotheses, (2) formulate an analysis plan, (3) analyze sample data, and (4) interpret results. We work through those steps below:

- State the hypotheses. The null and alternative hypotheses are:

H₀: Gender and voting preferences are independent.

Hₐ: Gender and voting preferences are not independent.

- Formulate an analysis plan . For this analysis, the significance level is 0.05. Using sample data, we will conduct a chi-square test for independence .

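- Analyze sample data. Applying the chi-square test for independence to the sample data, we compute the degrees of freedom, the expected frequency counts, and the chi-square test statistic.

$$DF = (r - 1)(c - 1) = (2 - 1)(3 - 1) = 2$$

$$E_{r,c} = \frac{n_r \, n_c}{n}$$

$$
\begin{aligned}
E_{1,1} &= (400 \times 450) / 1000 = 180 \\
E_{1,2} &= (400 \times 450) / 1000 = 180 \\
E_{1,3} &= (400 \times 100) / 1000 = 40 \\
E_{2,1} &= (600 \times 450) / 1000 = 270 \\
E_{2,2} &= (600 \times 450) / 1000 = 270 \\
E_{2,3} &= (600 \times 100) / 1000 = 60
\end{aligned}
$$

$$
\begin{aligned}
\chi^2 &= \sum \frac{(O_{r,c} - E_{r,c})^2}{E_{r,c}} \\
&= \frac{(200 - 180)^2}{180} + \frac{(150 - 180)^2}{180} + \frac{(50 - 40)^2}{40} + \frac{(250 - 270)^2}{270} + \frac{(300 - 270)^2}{270} + \frac{(50 - 60)^2}{60} \\
&= \frac{400}{180} + \frac{900}{180} + \frac{100}{40} + \frac{400}{270} + \frac{900}{270} + \frac{100}{60} \\
&= 2.22 + 5.00 + 2.50 + 1.48 + 3.33 + 1.67 = 16.2
\end{aligned}
$$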

where DF is the degrees of freedom, r is the number of levels of gender, c is the number of levels of the voting preference, n_r is the number of observations from level r of gender, n_c is the number of observations from level c of voting preference, n is the number of observations in the sample, E_{r,c} is the expected frequency count when gender is level r and voting preference is level c, and O_{r,c} is the observed frequency count when gender is level r and voting preference is level c.

The P-value is the probability that a chi-square statistic having 2 degrees of freedom is more extreme than 16.2. We use the Chi-Square Distribution Calculator to find P(χ² > 16.2) = 0.0003.

- Interpret results . Since the P-value (0.0003) is less than the significance level (0.05), we reject the null hypothesis. Thus, we conclude that there is a relationship between gender and voting preference.

Note: If you use this approach on an exam, you may also want to mention why this approach is appropriate. Specifically, the approach is appropriate because the sampling method was simple random sampling, the variables under study were categorical, and the expected frequency count was at least 5 in each cell of the contingency table.
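The four steps for the voter survey can be retraced in a few lines. This is a minimal sketch using the counts from the contingency table in the problem; the function name is hypothetical, and the P-value uses the closed form exp(-x/2), which holds only for 2 degrees of freedom.

```python
import math

# Observed counts: rows Male, Female; columns Rep, Dem, Ind.
observed = [
    [200, 150, 50],
    [250, 300, 50],
]
alpha = 0.05


def chi_square_independence(table):
    """Return (degrees of freedom, expected counts, chi-square statistic)."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    n = sum(row_totals)
    expected = [[r * c / n for c in col_totals] for r in row_totals]
    statistic = sum(
        (obs - exp) ** 2 / exp
        for obs_row, exp_row in zip(table, expected)
        for obs, exp in zip(obs_row, exp_row)
    )
    df = (len(row_totals) - 1) * (len(col_totals) - 1)
    return df, expected, statistic


df, expected, chi2 = chi_square_independence(observed)

# Condition check: every expected count should be at least 5.
assert min(min(row) for row in expected) >= 5

# Closed-form upper tail, valid only because df == 2 here.
p_value = math.exp(-chi2 / 2)
print(df, round(chi2, 1), round(p_value, 4), p_value < alpha)
```

Because the P-value falls below the significance level, the sketch reaches the same decision as the worked solution.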

Hypothesis Testing - Chi Squared Test

Lisa Sullivan, PhD

Professor of Biostatistics

Boston University School of Public Health

Introduction

This module will continue the discussion of hypothesis testing, where a specific statement or hypothesis is generated about a population parameter, and sample statistics are used to assess the likelihood that the hypothesis is true. The hypothesis is based on available information and the investigator's belief about the population parameters. The specific tests considered here are called chi-square tests and are appropriate when the outcome is discrete (dichotomous, ordinal or categorical). For example, in some clinical trials the outcome is a classification such as hypertensive, pre-hypertensive or normotensive. We could use the same classification in an observational study such as the Framingham Heart Study to compare men and women in terms of their blood pressure status - again using the classification of hypertensive, pre-hypertensive or normotensive status.

The technique to analyze a discrete outcome uses what is called a chi-square test. Specifically, the test statistic follows a chi-square probability distribution. We will consider chi-square tests here with one, two and more than two independent comparison groups.

Learning Objectives

After completing this module, the student will be able to:

- Perform chi-square tests by hand

- Appropriately interpret results of chi-square tests

- Identify the appropriate hypothesis testing procedure based on type of outcome variable and number of samples

Tests with One Sample, Discrete Outcome

Here we consider hypothesis testing with a discrete outcome variable in a single population. Discrete variables are variables that take on more than two distinct responses or categories and the responses can be ordered or unordered (i.e., the outcome can be ordinal or categorical). The procedure we describe here can be used for dichotomous (exactly 2 response options), ordinal or categorical discrete outcomes and the objective is to compare the distribution of responses, or the proportions of participants in each response category, to a known distribution. The known distribution is derived from another study or report and it is again important in setting up the hypotheses that the comparator distribution specified in the null hypothesis is a fair comparison. The comparator is sometimes called an external or a historical control.

In one sample tests for a discrete outcome, we set up our hypotheses against an appropriate comparator. We select a sample and compute descriptive statistics on the sample data. Specifically, we compute the sample size (n) and the proportions of participants in each response category.

Test Statistic for Testing H₀: p₁ = p₁₀, p₂ = p₂₀, ..., pₖ = pₖ₀

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

We find the critical value in a table of probabilities for the chi-square distribution with degrees of freedom (df) = k-1. In the test statistic, O = observed frequency and E = expected frequency in each of the response categories. The observed frequencies are those observed in the sample and the expected frequencies are computed as described below. χ² (chi-square) is another probability distribution and ranges from 0 to ∞. The test statistic formula above is appropriate for large samples, defined as expected frequencies of at least 5 in each of the response categories.

When we conduct a χ² test, we compare the observed frequencies in each response category to the frequencies we would expect if the null hypothesis were true. These expected frequencies are determined by allocating the sample to the response categories according to the distribution specified in H₀. This is done by multiplying the observed sample size (n) by the proportions specified in the null hypothesis (p₁₀, p₂₀, ..., pₖ₀). To ensure that the sample size is appropriate for the use of the test statistic above, we need to check the following: min(np₁₀, np₂₀, ..., npₖ₀) ≥ 5.

The test of hypothesis with a discrete outcome measured in a single sample, where the goal is to assess whether the distribution of responses follows a known distribution, is called the χ² goodness-of-fit test. As the name indicates, the idea is to assess whether the pattern or distribution of responses in the sample "fits" a specified population (external or historical) distribution. In the next example we illustrate the test. As we work through the example, we provide additional details related to the use of this new test statistic.

A University conducted a survey of its recent graduates to collect demographic and health information for future planning purposes as well as to assess students' satisfaction with their undergraduate experiences. The survey revealed that a substantial proportion of students were not engaging in regular exercise, many felt their nutrition was poor and a substantial number were smoking. In response to a question on regular exercise, 60% of all graduates reported getting no regular exercise, 25% reported exercising sporadically and 15% reported exercising regularly as undergraduates. The next year the University launched a health promotion campaign on campus in an attempt to increase health behaviors among undergraduates. The program included modules on exercise, nutrition and smoking cessation. To evaluate the impact of the program, the University again surveyed graduates and asked the same questions. The survey was completed by 470 graduates and the following data were collected on the exercise question:

| | No Regular Exercise | Sporadic Exercise | Regular Exercise | Total |
|---|---|---|---|---|
| Number of Students | 255 | 125 | 90 | 470 |

Based on the data, is there evidence of a shift in the distribution of responses to the exercise question following the implementation of the health promotion campaign on campus? Run the test at a 5% level of significance.

In this example, we have one sample and a discrete (ordinal) outcome variable (with three response options). We specifically want to compare the distribution of responses in the sample to the distribution reported the previous year (i.e., 60%, 25%, 15% reporting no, sporadic and regular exercise, respectively). We now run the test using the five-step approach.

- Step 1. Set up hypotheses and determine level of significance.

The null hypothesis again represents the "no change" or "no difference" situation. If the health promotion campaign has no impact then we expect the distribution of responses to the exercise question to be the same as that measured prior to the implementation of the program.

H₀: p₁ = 0.60, p₂ = 0.25, p₃ = 0.15, or equivalently H₀: Distribution of responses is 0.60, 0.25, 0.15

H₁: H₀ is false. α = 0.05

Notice that the research hypothesis is written in words rather than in symbols. The research hypothesis as stated captures any difference in the distribution of responses from that specified in the null hypothesis. We do not specify a specific alternative distribution; instead, we are testing whether the sample data "fit" the distribution in H₀ or not. With the χ² goodness-of-fit test there is no upper or lower tailed version of the test.

- Step 2. Select the appropriate test statistic.

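The test statistic is:

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

where $O$ is the observed frequency and $E$ is the expected frequency in each response category, and the sum is taken over the $k$ response categories.

We must first assess whether the sample size is adequate. Specifically, we need to check that $\min(np_1, np_2, \ldots, np_k) > 5$, where the $p_i$ are the proportions specified in the null hypothesis. The sample size here is n=470 and the proportions specified in the null hypothesis are 0.60, 0.25 and 0.15. Thus, min(470(0.60), 470(0.25), 470(0.15)) = min(282, 117.5, 70.5) = 70.5. The sample size is more than adequate, so the formula can be used.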
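The adequacy check above can be sketched in a few lines of Python. This is only an illustration using the sample size and null proportions from the example; the variable names are assumptions made for the sketch.

```python
# Sample size and null-hypothesis proportions taken from the example.
n = 470
null_props = [0.60, 0.25, 0.15]

# Under H0 the expected count in category i is n * p_i.
expected = [n * p for p in null_props]

# The chi-square approximation is treated as adequate when the
# smallest expected count exceeds 5.
smallest = min(expected)
adequate = smallest > 5

print(expected, smallest, adequate)
```

Here the smallest expected count belongs to the "Regular Exercise" category, which is well above the threshold.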

- Step 3. Set up decision rule.

The decision rule for the $\chi^2$ test depends on the level of significance and the degrees of freedom, defined as degrees of freedom (df) = k-1 (where k is the number of response categories). If the null hypothesis is true, the observed and expected frequencies will be close in value and the $\chi^2$ statistic will be close to zero. If the null hypothesis is false, then the $\chi^2$ statistic will be large. Critical values can be found in a table of probabilities for the $\chi^2$ distribution. Here we have df=k-1=3-1=2 and a 5% level of significance. The appropriate critical value is 5.99, and the decision rule is as follows: Reject $H_0$ if $\chi^2 > 5.99$.

- Step 4. Compute the test statistic.

We now compute the expected frequencies using the sample size and the proportions specified in the null hypothesis. We then substitute the sample data (observed frequencies) and the expected frequencies into the formula for the test statistic identified in Step 2. The computations can be organized as follows.

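| | No Regular Exercise | Sporadic Exercise | Regular Exercise | Total |
|---|---|---|---|---|
| Observed Frequencies (O) | 255 | 125 | 90 | 470 |
| Expected Frequencies (E) | 470(0.60) = 282 | 470(0.25) = 117.5 | 470(0.15) = 70.5 | 470 |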

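Notice that the expected frequencies are taken to one decimal place and that the sum of the observed frequencies is equal to the sum of the expected frequencies. The test statistic is computed as follows:

$$\chi^2 = \frac{(255 - 282)^2}{282} + \frac{(125 - 117.5)^2}{117.5} + \frac{(90 - 70.5)^2}{70.5} = 2.59 + 0.48 + 5.39 = 8.46$$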
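As a check on the arithmetic in Step 4, the statistic can be recomputed from the observed counts and null proportions. This is a minimal sketch; `chi_square_statistic` is a helper name introduced here for illustration, not a library function.

```python
def chi_square_statistic(observed, expected):
    """Sum of (O - E)^2 / E over all response categories."""
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected))


# Observed counts and null proportions from the exercise example.
observed = [255, 125, 90]
n = sum(observed)
expected = [n * p for p in (0.60, 0.25, 0.15)]

chi2 = chi_square_statistic(observed, expected)
print(round(chi2, 2))
```

The rounded result agrees with the value of 8.46 used in Step 5.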

- Step 5. Conclusion.

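We reject $H_0$ because 8.46 > 5.99. We have statistically significant evidence at $\alpha = 0.05$ to show that $H_0$ is false, or that the distribution of responses is not 0.60, 0.25, 0.15. With df=2, the statistic 8.46 falls between the critical values 7.38 (upper-tail probability 0.025) and 9.21 (upper-tail probability 0.01), so the p-value is p < 0.025 (approximately 0.015).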
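For df=2 specifically, the upper-tail probability of the chi-square distribution has the closed form exp(-x/2), so both the critical value and the p-value can be evaluated without a table. The sketch below relies on that special case only; it does not generalize to other degrees of freedom, and the variable names are illustrative.

```python
import math

alpha = 0.05
chi2 = 8.46  # test statistic from Step 4

# With df = 2, P(X > x) = exp(-x / 2), so the critical value c
# solves exp(-c / 2) = alpha.
critical_value = -2 * math.log(alpha)

# Upper-tail probability of the observed statistic.
p_value = math.exp(-chi2 / 2)

# Decision rule from Step 3: reject H0 when the statistic exceeds c.
reject_h0 = chi2 > critical_value

print(round(critical_value, 2), round(p_value, 4), reject_h0)
```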

In the $\chi^2$ goodness-of-fit test, we conclude either that the distribution specified in $H_0$ is false (when we reject $H_0$) or that we do not have sufficient evidence to show that the distribution specified in $H_0$ is false (when we fail to reject $H_0$). Here, we reject $H_0$ and conclude that the distribution of responses to the exercise question following the implementation of the health promotion campaign was not the same as the distribution prior to it. The test itself does not provide details of how the distribution has shifted. A comparison of the observed and expected frequencies will provide some insight into the shift (when the null hypothesis is rejected). Does it appear that the health promotion campaign was effective?

Consider the following:

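| | No Regular Exercise | Sporadic Exercise | Regular Exercise | Total |
|---|---|---|---|---|
| Observed Frequencies (O) | 255 | 125 | 90 | 470 |
| Expected Frequencies (E) | 282 | 117.5 | 70.5 | 470 |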

If the null hypothesis were true (i.e., no change from the prior year), we would have expected more students to fall in the "No Regular Exercise" category and fewer in the "Regular Exercise" category. In the sample, 255/470 = 54% reported no regular exercise and 90/470 = 19% reported regular exercise, compared with 60% and 15% the year before. Thus, there is a shift toward more regular exercise following the implementation of the health promotion campaign. There is evidence of a statistical difference, but is this a meaningful difference? Is there room for improvement?

The National Center for Health Statistics (NCHS) provided data on the distribution of weight (in categories) among Americans in 2002. The distribution was based on specific values of body mass index (BMI) computed as weight in kilograms over height in meters squared. Underweight was defined as BMI< 18.5, Normal weight as BMI between 18.5 and 24.9, overweight as BMI between 25 and 29.9 and obese as BMI of 30 or greater. Americans in 2002 were distributed as follows: 2% Underweight, 39% Normal Weight, 36% Overweight, and 23% Obese. Suppose we want to assess whether the distribution of BMI is different in the Framingham Offspring sample. Using data from the n=3,326 participants who attended the seventh examination of the Offspring in the Framingham Heart Study we created the BMI categories as defined and observed the following:

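| | Underweight (BMI < 18.5) | Normal Weight (BMI 18.5 to 24.9) | Overweight (BMI 25.0 to 29.9) | Obese (BMI ≥ 30) | Total |
|---|---|---|---|---|---|
| # of Participants | 20 | 932 | 1374 | 1000 | 3326 |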

- Step 1. Set up hypotheses and determine level of significance.

$H_0$: $p_1 = 0.02$, $p_2 = 0.39$, $p_3 = 0.36$, $p_4 = 0.23$, or equivalently

$H_0$: Distribution of responses is 0.02, 0.39, 0.36, 0.23

$H_1$: $H_0$ is false. $\alpha = 0.05$

The formula for the test statistic is:

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

We must assess whether the sample size is adequate. Specifically, we need to check that $\min(np_1, np_2, \ldots, np_k) > 5$, where the $p_i$ are the proportions specified in the null hypothesis. The sample size here is n=3,326 and the proportions specified in the null hypothesis are 0.02, 0.39, 0.36 and 0.23. Thus, min(3326(0.02), 3326(0.39), 3326(0.36), 3326(0.23)) = min(66.5, 1297.1, 1197.4, 765.0) = 66.5. The sample size is more than adequate, so the formula can be used.

Here we have df=k-1=4-1=3 and a 5% level of significance. The appropriate critical value is 7.81, and the decision rule is as follows: Reject $H_0$ if $\chi^2 > 7.81$.

We now compute the expected frequencies using the sample size and the proportions specified in the null hypothesis. We then substitute the sample data (observed frequencies) into the formula for the test statistic identified in Step 2. We organize the computations in the following table.

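| | Underweight (BMI < 18.5) | Normal Weight (BMI 18.5 to 24.9) | Overweight (BMI 25.0 to 29.9) | Obese (BMI ≥ 30) | Total |
|---|---|---|---|---|---|
| Observed Frequencies (O) | 20 | 932 | 1374 | 1000 | 3326 |
| Expected Frequencies (E) | 66.5 | 1297.1 | 1197.4 | 765.0 | 3326 |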

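The test statistic is computed as follows:

$$\chi^2 = \frac{(20 - 66.5)^2}{66.5} + \frac{(932 - 1297.1)^2}{1297.1} + \frac{(1374 - 1197.4)^2}{1197.4} + \frac{(1000 - 765.0)^2}{765.0} = 32.52 + 102.77 + 26.05 + 72.19 = 233.53$$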

We reject $H_0$ because 233.53 > 7.81. We have statistically significant evidence at $\alpha = 0.05$ to show that $H_0$ is false, or that the distribution of BMI in Framingham is different from the national data reported in 2002, p < 0.005.
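The BMI example can be reproduced end to end with the standard library. The sketch below is one possible approach, not the method used to produce the numbers in the text: `chi2_sf` is a hypothetical helper written for this illustration, and it uses the finite-sum expressions for the chi-square upper tail that hold when df is a positive integer.

```python
import math


def chi2_sf(x, df):
    """Upper-tail probability P(X > x) for chi-square with integer df >= 1."""
    if x <= 0:
        return 1.0
    half = x / 2.0
    if df % 2 == 0:
        # Even df: exp(-x/2) times a finite sum of (x/2)^i / i!.
        term, total = 1.0, 1.0
        for i in range(1, df // 2):
            term *= half / i
            total += term
        return math.exp(-half) * total
    # Odd df: two-sided normal tail plus a finite correction sum.
    term, total = 1.0, 0.0
    for j in range(1, (df - 1) // 2 + 1):
        if j > 1:
            term *= x / (2 * j - 1)
        total += term
    tail = math.erfc(math.sqrt(half))
    return tail + math.sqrt(2 * x / math.pi) * math.exp(-half) * total


# Observed counts and the expected counts (one decimal place) from the table.
observed = [20, 932, 1374, 1000]
expected = [66.5, 1297.1, 1197.4, 765.0]

chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))
df = len(observed) - 1
p_value = chi2_sf(chi2, df)

print(round(chi2, 1), df, p_value < 0.005)
```

Summing the unrounded terms gives a value that differs from 233.53 only in the second decimal place, because the text rounds each term before adding.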

Again, the $\chi^2$ goodness-of-fit test allows us to assess whether the distribution of responses "fits" a specified distribution. Here we show that the distribution of BMI in the Framingham Offspring Study is different from the national distribution. To understand the nature of the difference, we can compare observed and expected frequencies or observed and expected proportions (or percentages). Because the frequencies are large (a consequence of the large sample size), percentages are easier to compare. The observed percentages of participants in the Framingham sample are as follows: 0.6% underweight, 28% normal weight, 41% overweight and 30% obese. In the Framingham Offspring sample there are higher percentages of overweight and obese persons (41% and 30% in Framingham as compared to 36% and 23% in the national data), and lower percentages of underweight and normal weight persons (0.6% and 28% in Framingham as compared to 2% and 39% in the national data). Are these meaningful differences?

In the module on hypothesis testing for means and proportions, we discussed hypothesis testing applications with a dichotomous outcome variable in a single population. We presented a test using a test statistic Z to test whether an observed (sample) proportion differed significantly from a historical or external comparator. The chi-square goodness-of-fit test can also be used with a dichotomous outcome and the results are mathematically equivalent.

In the prior module, we considered the following example. Here we show the equivalence to the chi-square goodness-of-fit test.

The NCHS report indicated that in 2002, 75% of children aged 2 to 17 saw a dentist in the past year. An investigator wants to assess whether use of dental services is similar in children living in the city of Boston. A sample of 125 children aged 2 to 17 living in Boston is surveyed and 64 report seeing a dentist over the past 12 months. Is there a significant difference in use of dental services between children living in Boston and the national data?

We presented the following approach to the test using a Z statistic.

- Step 1. Set up hypotheses and determine level of significance

$H_0$: $p = 0.75$

$H_1$: $p \neq 0.75$. $\alpha = 0.05$

We must first check that the sample size is adequate. Specifically, we need to check $\min(np_0, n(1-p_0))$ = min(125(0.75), 125(1-0.75)) = min(93.75, 31.25) = 31.25. The sample size is more than adequate, so the following formula can be used:

$$Z = \frac{\hat{p} - p_0}{\sqrt{\dfrac{p_0(1 - p_0)}{n}}}$$

This is a two-tailed test, using a Z statistic and a 5% level of significance. Reject $H_0$ if Z < -1.960 or if Z > 1.960.

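We now substitute the sample data into the formula for the test statistic identified in Step 2. The sample proportion is:

$$\hat{p} = \frac{64}{125} = 0.512$$

and the test statistic is:

$$Z = \frac{0.512 - 0.75}{\sqrt{\dfrac{0.75(0.25)}{125}}} = \frac{-0.238}{0.0387} = -6.15$$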

We reject $H_0$ because -6.15 < -1.960. We have statistically significant evidence at $\alpha = 0.05$ to show that there is a difference in the use of dental services by children living in Boston as compared to the national data (p < 0.0001).
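The Z test for the dental example can be checked with a short calculation. This is a sketch using only the figures from the example (125 children, 64 of whom saw a dentist, and a null proportion of 0.75); the two-sided p-value uses the standard normal tail expressed through `math.erfc`.

```python
import math

# Figures from the dental services example.
n = 125
saw_dentist = 64
p0 = 0.75

# Sample proportion and Z statistic for a one-sample test of a proportion.
p_hat = saw_dentist / n
z = (p_hat - p0) / math.sqrt(p0 * (1 - p0) / n)

# Two-sided p-value: P(|Z| > |z|) = erfc(|z| / sqrt(2)) for a standard normal.
p_value = math.erfc(abs(z) / math.sqrt(2))

# Decision rule from the text: reject H0 if Z < -1.960 or Z > 1.960.
reject_h0 = abs(z) > 1.960

print(round(p_hat, 3), round(z, 2), reject_h0)
```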

We now conduct the same test using the chi-square goodness-of-fit test. First, we summarize our sample data as follows:

| | Saw a Dentist in Past 12 Months | Did Not See a Dentist in Past 12 Months | Total |
|---|---|---|---|
| # of Participants | 64 | 61 | 125 |

$H_0$: $p_1 = 0.75$, $p_2 = 0.25$, or equivalently $H_0$: Distribution of responses is 0.75, 0.25

We must assess whether the sample size is adequate. Specifically, we need to check that $\min(np_1, np_2, \ldots, np_k) > 5$, where the $p_i$ are the proportions specified in the null hypothesis. The sample size here is n=125 and the proportions specified in the null hypothesis are 0.75 and 0.25. Thus, min(125(0.75), 125(0.25)) = min(93.75, 31.25) = 31.25. The sample size is more than adequate, so the formula can be used.

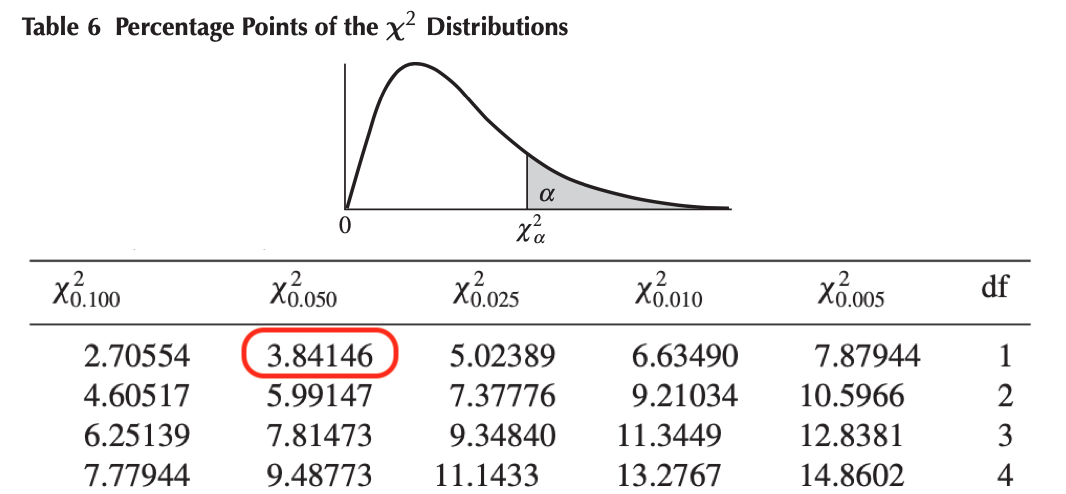

Here we have df=k-1=2-1=1 and a 5% level of significance. The appropriate critical value is 3.84, and the decision rule is as follows: Reject $H_0$ if $\chi^2 > 3.84$. (Note that $1.96^2 = 3.84$, where 1.96 was the critical value used in the Z test for proportions shown above.)

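| | Saw a Dentist in Past 12 Months | Did Not See a Dentist in Past 12 Months | Total |
|---|---|---|---|
| Observed Frequencies (O) | 64 | 61 | 125 |
| Expected Frequencies (E) | 93.75 | 31.25 | 125 |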

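The test statistic is computed as follows:

$$\chi^2 = \frac{(64 - 93.75)^2}{93.75} + \frac{(61 - 31.25)^2}{31.25} = 9.44 + 28.32 = 37.8$$

(Note that $(-6.15)^2 = 37.8$, where -6.15 was the value of the Z statistic in the test for proportions shown above.)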

We reject $H_0$ because 37.8 > 3.84. We have statistically significant evidence at $\alpha = 0.05$ to show that there is a difference in the use of dental services by children living in Boston as compared to the national data (p < 0.0001). This is the same conclusion we reached when we conducted the test using the Z test above. With a dichotomous outcome, $Z^2 = \chi^2$. In statistics, there are often several approaches that can be used to test hypotheses.
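The equivalence between the two tests for a dichotomous outcome can be verified numerically. The sketch below recomputes both statistics from the dental data and compares the squared Z statistic with the chi-square statistic; the variable names are illustrative.

```python
import math

# Dental services example: 64 of 125 children saw a dentist; H0: p = 0.75.
n, saw_dentist, p0 = 125, 64, 0.75

# Z statistic for a single proportion.
p_hat = saw_dentist / n
z = (p_hat - p0) / math.sqrt(p0 * (1 - p0) / n)

# Goodness-of-fit statistic over the two response categories.
observed = [saw_dentist, n - saw_dentist]
expected = [n * p0, n * (1 - p0)]
chi2 = sum((o - e) ** 2 / e for o, e in zip(observed, expected))

# The two quantities agree up to floating-point error.
same = math.isclose(z ** 2, chi2, rel_tol=1e-9)

print(round(z ** 2, 1), round(chi2, 1), same)
```

The agreement is algebraic rather than coincidental: with two categories both deviations equal the same amount in absolute value, so the two terms of the chi-square sum combine into the squared Z statistic.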

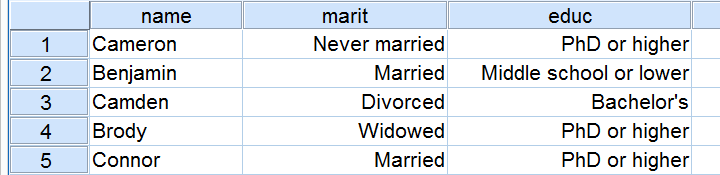

Tests for Two or More Independent Samples, Discrete Outcome

Here we extend that application of the chi-square test to the case with two or more independent comparison groups. Specifically, the outcome of interest is discrete with two or more responses and the responses can be ordered or unordered (i.e., the outcome can be dichotomous, ordinal or categorical). We now consider the situation where there are two or more independent comparison groups and the goal of the analysis is to compare the distribution of responses to the discrete outcome variable among several independent comparison groups.

The test is called the $\chi^2$ test of independence and the null hypothesis is that there is no difference in the distribution of responses to the outcome across comparison groups. This is often stated as follows: The outcome variable and the grouping variable (e.g., the comparison treatments or comparison groups) are independent (hence the name of the test). Independence here implies homogeneity in the distribution of the outcome among comparison groups.

The null hypothesis in the $\chi^2$ test of independence is often stated in words as: $H_0$: The distribution of the outcome is independent of the groups. The alternative or research hypothesis is that there is a difference in the distribution of responses to the outcome variable among the comparison groups (i.e., that the distribution of responses "depends" on the group). In order to test the hypothesis, we measure the discrete outcome variable in each participant in each comparison group. The data of interest are the observed frequencies (or number of participants in each response category in each group). The formula for the test statistic for the $\chi^2$ test of independence is given below.

Test Statistic for Testing $H_0$: Distribution of outcome is independent of groups

$$\chi^2 = \sum \frac{(O - E)^2}{E}$$

and we find the critical value in a table of probabilities for the chi-square distribution with df=(r-1)*(c-1).

Here O = observed frequency, E=expected frequency in each of the response categories in each group, r = the number of rows in the two-way table and c = the number of columns in the two-way table. r and c correspond to the number of comparison groups and the number of response options in the outcome (see below for more details). The observed frequencies are the sample data and the expected frequencies are computed as described below. The test statistic is appropriate for large samples, defined as expected frequencies of at least 5 in each of the response categories in each group.
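To make the roles of r, c, O and E concrete, the sketch below computes the statistic and degrees of freedom for a small two-way table. Two assumptions are made here that are not taken from the text: the counts are invented purely for illustration, and the expected frequencies use the standard formula (row total × column total / overall total), since the text defers that computation to a later passage. `independence_statistic` is a hypothetical helper name.

```python
def independence_statistic(table):
    """Return (chi2, df) for a two-way table given as a list of rows."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    grand_total = sum(row_totals)

    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Assumed standard formula for the expected count under independence.
            expected = row_totals[i] * col_totals[j] / grand_total
            chi2 += (observed - expected) ** 2 / expected

    df = (len(table) - 1) * (len(table[0]) - 1)
    return chi2, df


# Hypothetical counts (not from the text): 2 groups (rows) by 3 responses (columns).
made_up_table = [
    [10, 20, 30],
    [20, 20, 20],
]

chi2, df = independence_statistic(made_up_table)
print(round(chi2, 2), df)
```

With r=2 rows and c=3 columns the degrees of freedom are (2-1)*(3-1)=2, so the statistic would be compared with the same critical value of 5.99 used in the exercise example.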

The data for the $\chi^2$ test of independence are organized in a two-way table. The outcome and grouping variable are shown in the rows and columns of the table. The sample table below illustrates the data layout. The table entries (blank below) are the numbers of participants in each group responding to each response category of the outcome variable.